01 · Research focus

Modeling physical worlds across space, modality, and time.

Spatial Intelligence

Learning structured 3D/4D representations for scenes, agents, motion, and geometry in dynamic physical environments.

Multimodal Foundation Model

Connecting vision, language, temporal signals, and spatial context for generalizable physical-world understanding.

World Model

Modeling how scenes evolve, how agents move, and how actions may change the physical world.

02 · Updates

Recent signals

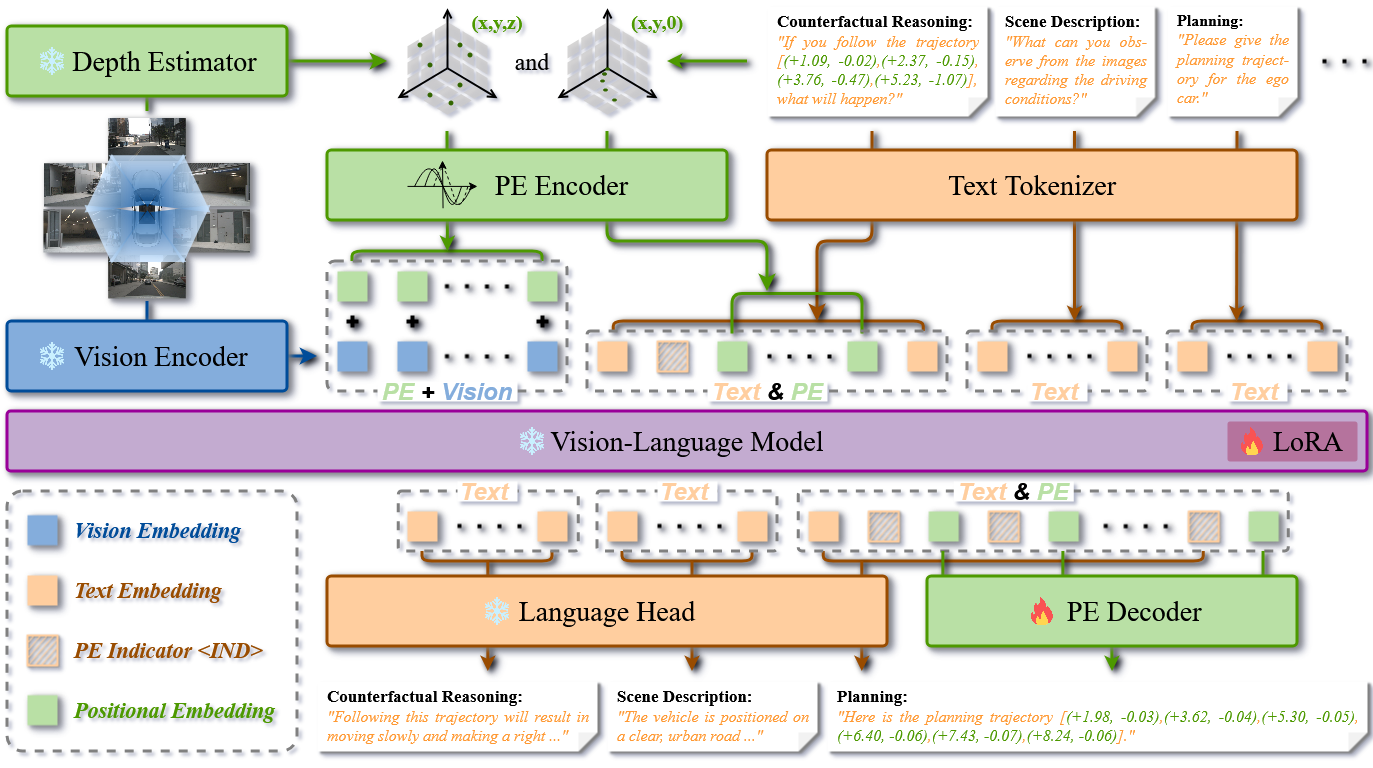

| Feb 26, 2026 | Our SpaceDrive: Infusing Spatial Awareness into VLM-based Autonomous Driving paper is accepted by CVPR 2026. 🎉 The 1st ranking on nuScenes benchmark and 2nd best close-loop performance on Bench2Drive leaderboard! |

|---|---|

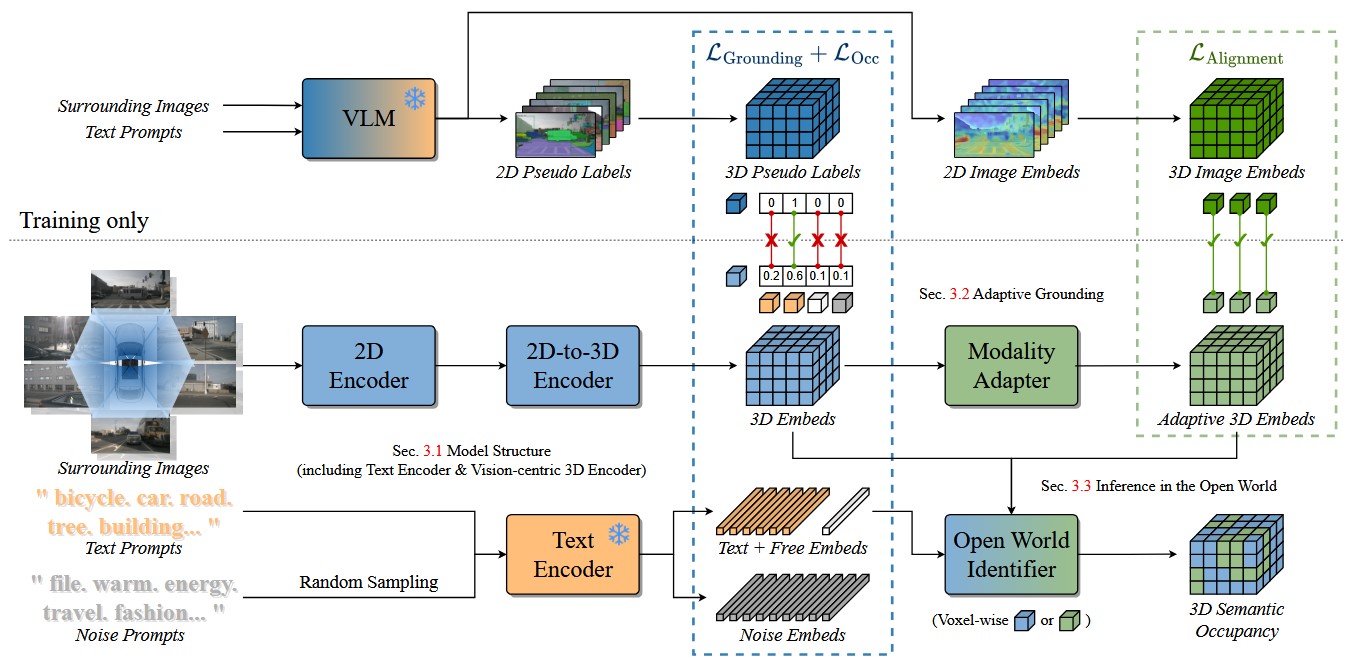

| Jun 25, 2025 | Our AGO: Adaptive Grounding for Open World 3D Occupancy Prediction paper is accepted by ICCV 2025. 🎉 |

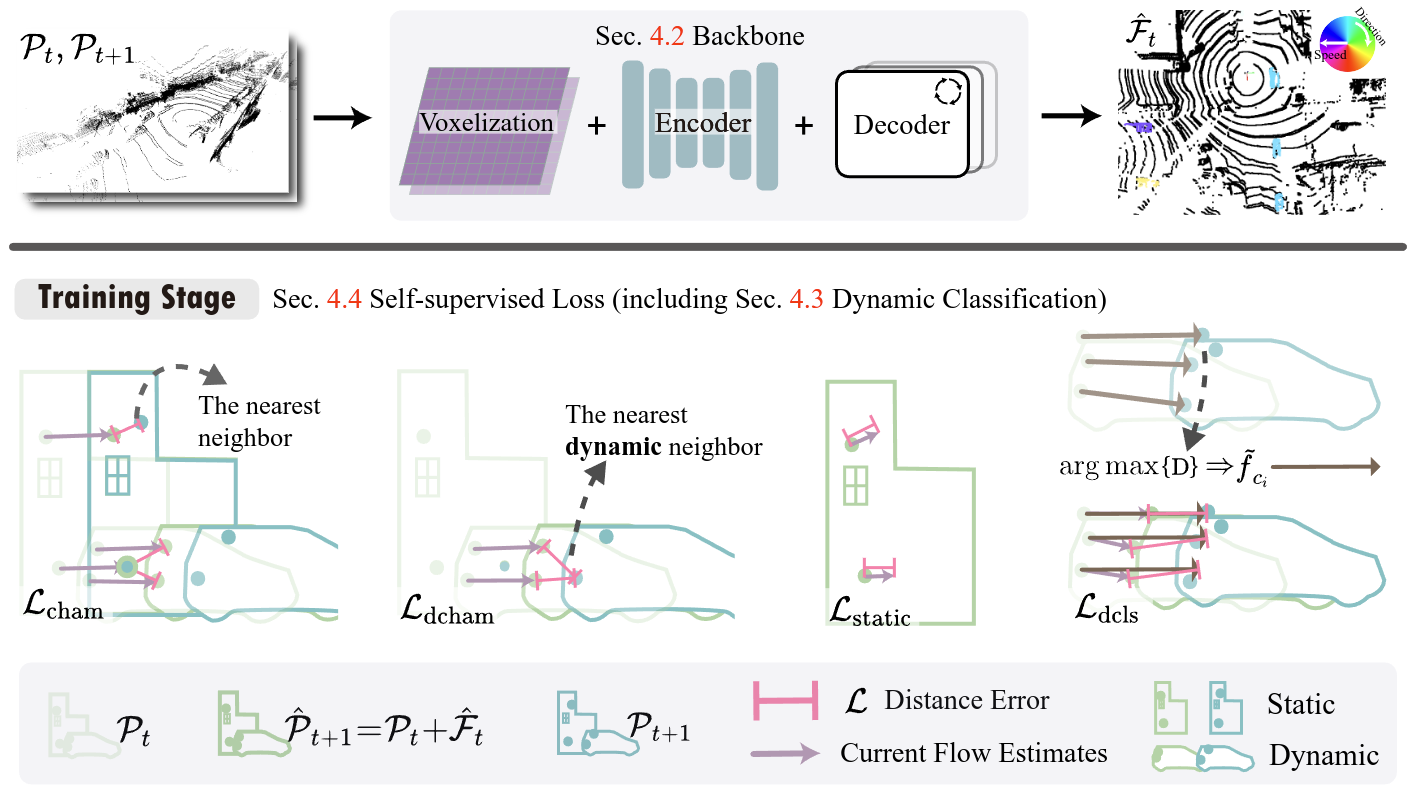

| Jul 01, 2024 | Our SeFlow: A Self-Supervised Scene Flow Method in Autonomous Driving paper is accepted by ECCV 2024. 🎉 The 1st ranking on Argoverse 2 Self-supervised scene flow leaderboard! |

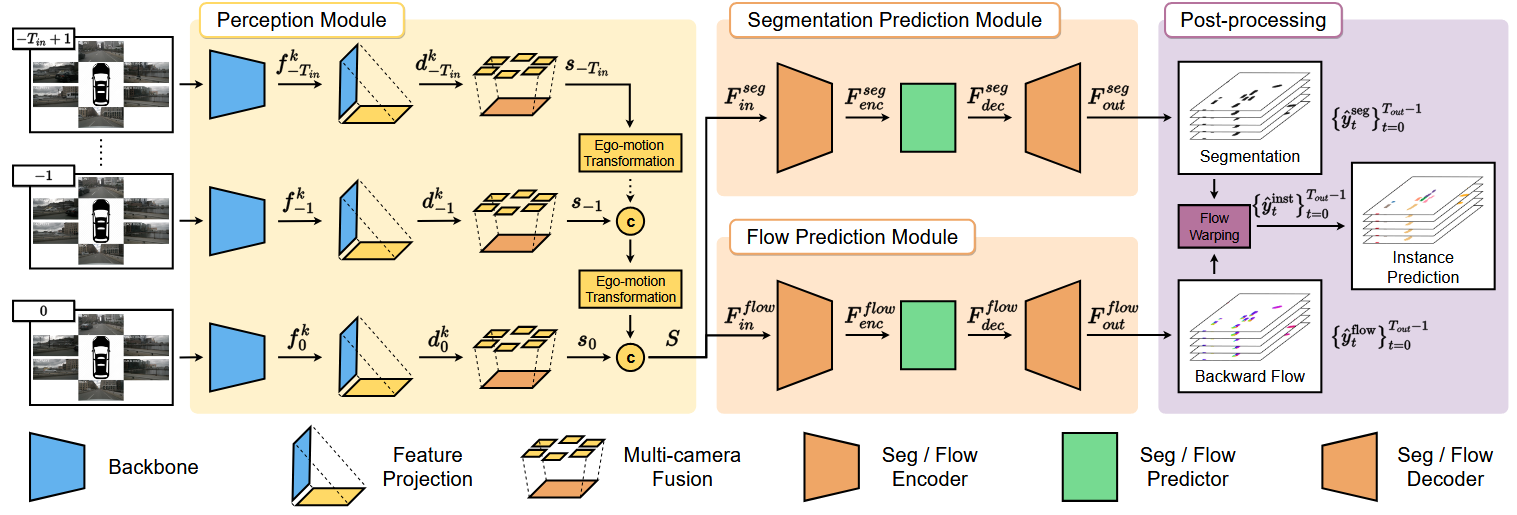

| Apr 19, 2023 | PowerBEV, our paper on camera-based end-to-end instance prediction in bird’s-eye view, has been accepted by IJCAI 2023. 🎉 |

03 · Publications

Publication highlights

- CVPR 2026

SpaceDrive: Infusing Spatial Awareness into VLM-based Autonomous Driving

Infusing explicit spatial representations into vision-language models for robust autonomous driving with 3D spatial reasoning.

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2026


04 · Writing

Research notes

| Date | Note |
|---|---|
| Dec 24, 2025 | SpaceDrive: Infusing Spatial Awareness into VLM-based Autonomous Driving |
| Dec 15, 2025 | In the Perspective of Manifold Hypotheses - 2 |
| Nov 25, 2025 | In the Perspective of Manifold Hypotheses - 1 |

05 · Contact & positions